Closed Captions vs Subtitles

Intro

Anyone who makes or simply watches videos might wonder: what is the difference between closed captions and subtitles? Which one should you go for?

For newcomers, the distinction can be a real headache, but don’t worry. We’ve got your back with a simple breakdown!

What Are Closed Captions And How Do They Work?

Closed captions are familiar to most of us. They present the spoken portion of a television program, movie, or computer presentation in text form.

Closed captioning is useful when the audio cannot be heard, such as in a noisy airport, or in a setting where silence is required, like a hospital.

Closed captions are encoded into the video signal and decoded by the television or player, which displays them as text. On most modern televisions, you can toggle closed captioning on or off via an on-screen menu.

Most shows are captioned before they are broadcast. However, some programs, such as live news broadcasts, require real-time captioning.

For these, a stenographer or captioning service listens to the broadcast and types a shorthand transcription into a program. The program then converts the shorthand into caption text and adds it to the television signal.

Besides closed captions, you may have heard of open captions. So, open vs closed captions: how do they differ?

Open captions are permanently integrated into the video and cannot be disabled. Because they give viewers less choice, open captions are typically used only where deaf and hard-of-hearing audiences would otherwise miss all the dialogue.

Both closed and open captions are available for some movies, allowing viewers to select the style of captioning they prefer.

What Are Subtitles?

Video subtitles are translations of the spoken dialogue, without sound effects. They are designed for viewers who can hear the audio but do not understand the language.

Although closed captions and subtitles serve different purposes, they are both always timed to the media and, for the most part, allow viewers to switch them on or off.
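To illustrate what "timed to the media" means in practice, here is a minimal Python sketch that writes timed text in the SubRip (.srt) layout, a common plain-text subtitle format. The `Cue` class and the function names are hypothetical, chosen only for this illustration; they are not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Cue:
    """One piece of on-screen text with its display window (hypothetical type)."""
    start_ms: int  # when the text appears, in milliseconds from the start
    end_ms: int    # when the text disappears, in milliseconds from the start
    text: str


def srt_timestamp(ms: int) -> str:
    """Format milliseconds as an SRT timestamp: HH:MM:SS,mmm."""
    hours, rest = divmod(ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    seconds, millis = divmod(rest, 1_000)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d},{millis:03d}"


def to_srt(cues: list[Cue]) -> str:
    """Render cues as numbered SRT blocks separated by blank lines."""
    blocks = []
    for index, cue in enumerate(cues, start=1):
        timing = f"{srt_timestamp(cue.start_ms)} --> {srt_timestamp(cue.end_ms)}"
        blocks.append(f"{index}\n{timing}\n{cue.text}\n")
    return "\n".join(blocks)


if __name__ == "__main__":
    print(to_srt([Cue(0, 1500, "Hello!"), Cue(2000, 4250, "Welcome back.")]))
```

Each block tells the player when to show the text and when to hide it, which is how both captions and subtitles stay in sync with the video.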

What Is The Difference Between Subtitles And Closed Captions?

Closed captions are text transcriptions of the spoken words and of non-speech elements, such as sound effects, in the video stream.

Because they distinguish between speakers, describe background noises, and provide other pertinent information, captions are meant for deaf or hard-of-hearing viewers. In contrast, subtitles help those who have trouble understanding the language.

Subtitles are translations of the spoken audio, usually displayed at the bottom of the screen. People who watch movies or TV shows with subtitles typically do so because they cannot understand the spoken language.

Movies, for instance, are subtitled in the languages of the countries where they are released. When a Chinese movie premieres in an English-speaking country, the Mandarin spoken in the movie is translated into English for the subtitles.

As another example, if you speak English and are watching a French movie, the translations at the bottom of the screen are subtitles. Subtitles are usually created before a movie or TV show is released.
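The contrast can be sketched in a few lines of Python. This is only an illustration under assumed conventions: the `AudioEvent` class, the square brackets around sounds, and the upper-case speaker labels are hypothetical choices, not a standard that every captioning service follows.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AudioEvent:
    """One moment of a video's soundtrack (hypothetical type for illustration)."""
    speaker: Optional[str]      # who is talking, if anyone
    dialogue: Optional[str]     # spoken words in the original language
    sound: Optional[str]        # non-speech audio, e.g. "door slams"
    translation: Optional[str]  # dialogue translated for foreign viewers


def caption_text(event: AudioEvent) -> str:
    """Captions: same language as the audio, plus sounds and speaker labels."""
    parts = []
    if event.sound:
        parts.append(f"[{event.sound}]")
    if event.dialogue:
        label = f"{event.speaker.upper()}: " if event.speaker else ""
        parts.append(label + event.dialogue)
    return " ".join(parts)


def subtitle_text(event: AudioEvent) -> Optional[str]:
    """Subtitles: only the translated dialogue; nothing for pure sounds."""
    return event.translation


if __name__ == "__main__":
    event = AudioEvent(
        speaker="Marie",
        dialogue="Bonjour, tout le monde.",
        sound="door slams",
        translation="Hello, everyone.",
    )
    print(caption_text(event))   # [door slams] MARIE: Bonjour, tout le monde.
    print(subtitle_text(event))  # Hello, everyone.
```

The caption keeps the original French and adds what a deaf or hard-of-hearing viewer would miss, while the subtitle gives an English speaker only the translated line.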

Which One Should I Use: Closed Captions Vs Subtitles?

This depends on your goals. Subtitles let viewers watch a video even if they do not understand the language spoken in it.

With the expansion of international video platforms, many video producers recognize the value of adding subtitles to make their content accessible in more countries. Viewers, for their part, can always turn subtitles off, for example to practice a foreign language.

In the same vein, as more people view videos on their phones while the sound is muted in public spaces, the use of closed captions has increased along with mobile video consumption.

Should Every Video Include Closed Captions Or Subtitles?

All marketing videos should contain captions or subtitles for user preference and accessibility.

Adding captions or subtitles is relatively cheap and easy, and the payoff is significant: you can reach a wider audience with a single video.

How To Create Subtitles And Closed Captions Effectively?

The Problems With Manual Captioning/Subtitling

Captioning and subtitling take a lot of time when done manually. The work is arduous and prone to mistakes.

You may also run into languages that are outside your range of knowledge. Fortunately, modern tools can relieve video editors of this massive, time-consuming burden. They help solve three significant problems: laborious work, error-prone transcription, and limited language coverage.

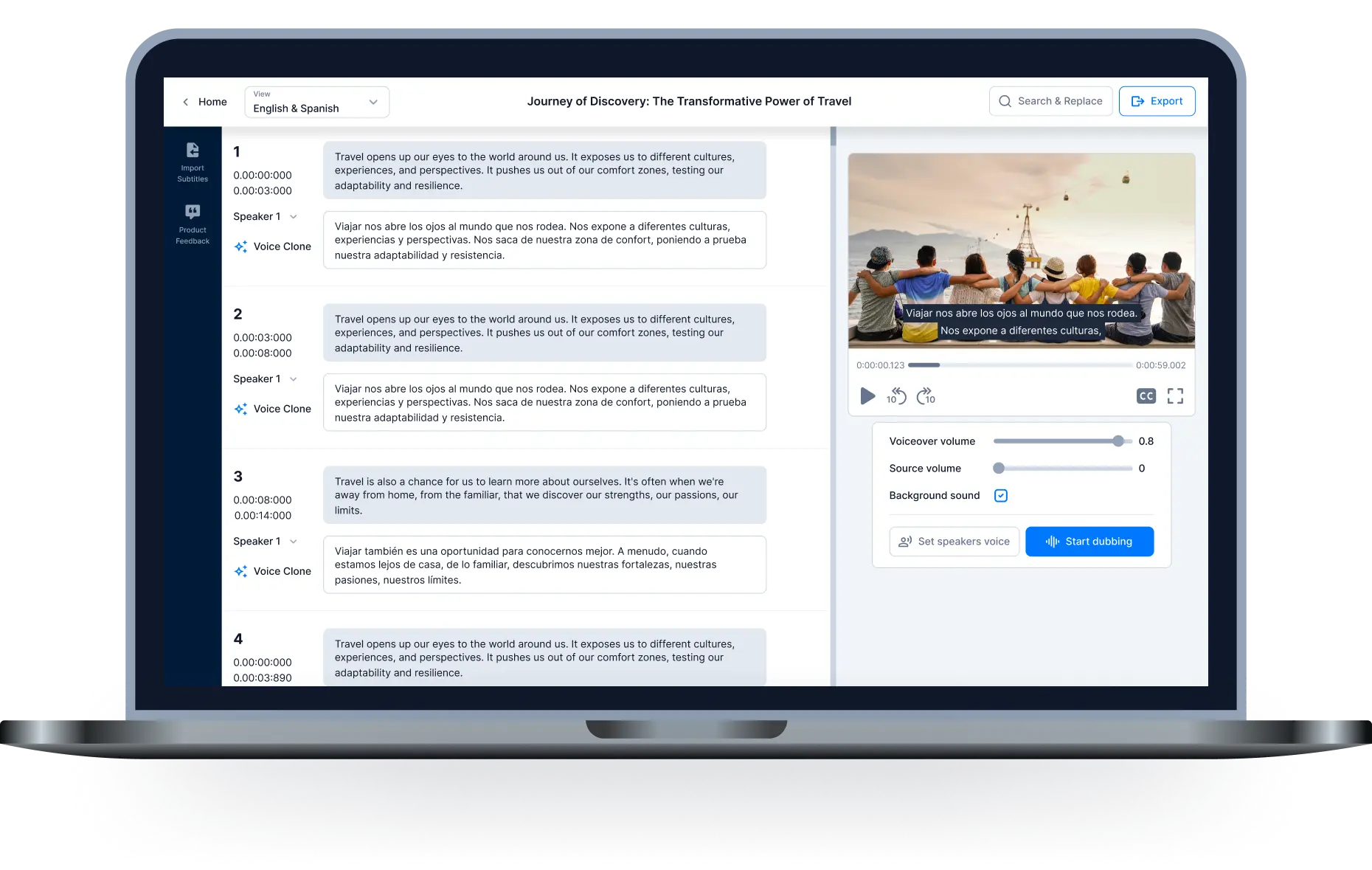

Solutions: Hei.io

Hei.io is an AI tool for creating captions, subtitles, and voice-overs, available in 70 languages and 250 voices. Not many platforms offer such a wide range. Captions, translated subtitles, background music, and more are handy for video editors no matter what language they are working in.

Within a few clicks, you can let Hei.io produce the language output you need. The rest is just editing, which lifts a massive burden from the transcription process.

How To Use Hei.io: Step-By-Step Instructions

Step 1: Add files and choose target languages.

Link to your video or upload directly from your computer.

Pick the language originally spoken in the video and the target translation language.

Step 2: Let the machine do the hard work.

Press Run. Soon, voice-over, translations, and captions will all be automatically generated.

The processing time depends mainly on the video's length.

Step 3: Video download, editing, and export.

Once processing is done, click Download Video to finish, or Edit Video to make changes.

The editor offers a variety of customizable tools. When finished, select "Export".

Conclusion

Understanding the difference between closed captions and subtitles is crucial at the start of any transcription job. There are many ways to generate subtitles and closed captions for your videos, but intelligent tools can greatly reduce a transcriber's workload.

In a world of competing priorities, AI tools like this can significantly boost your productivity and work quality. Hei.io offers a cost-effective way to produce precise captioning, subtitling, and voice dubbing in a short amount of time.

Sign up now at Hei.io - AI Dubbing!
